Combined Ultrasound (US) and Photoacoustic (PA) Simulations

Combined ultrasound and photoacoustic (USPA) imaging has found several clinical applications because it can simultaneously display structural and molecular information from deep biological tissue in real time. Here, we developed a hybrid USPA simulation platform by integrating finite element models of light and ultrasound propagation. The platform allows optimization of device design parameters, such as the aperture size and frequency of the light source and ultrasound detector arrays. In addition, the potential of this platform to generate massive USPA datasets for data-driven, deep-learning-enhanced USPA imaging has been validated using both simulations and experiments.
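In a hybrid pipeline of this kind, the optical and acoustic models are usually coupled through the initial pressure distribution, which under the standard photoacoustic generation relation is p0 = Γ · μa · Φ (Grüneisen parameter times absorption coefficient times optical fluence). The snippet below is only a minimal sketch of that coupling step, not the platform's actual code: the function name, the nested-list image layout, and the default Γ = 0.2 are assumptions made for illustration.

```python
def initial_pressure(mu_a, fluence, grueneisen=0.2):
    """Per-pixel initial pressure p0 = Gamma * mu_a * Phi.

    mu_a     : 2-D list of absorption coefficients (1/cm)
    fluence  : 2-D list of optical fluence values (mJ/cm^2), same shape
    grueneisen : dimensionless Grueneisen parameter (0.2 is an assumed,
                 illustrative soft-tissue value)
    Returns a 2-D list in kPa (1 mJ/cm^3 equals 1 kPa).
    """
    if len(mu_a) != len(fluence):
        raise ValueError("mu_a and fluence must have the same shape")
    p0 = []
    for row_a, row_f in zip(mu_a, fluence):
        if len(row_a) != len(row_f):
            raise ValueError("mu_a and fluence must have the same shape")
        p0.append([grueneisen * a * f for a, f in zip(row_a, row_f)])
    return p0


# Illustrative values only: a weakly and a strongly absorbing pixel.
p0_map = initial_pressure([[0.1, 2.0]], [[10.0, 5.0]])
```

The resulting map would then serve as the source term handed to the acoustic solver.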

Figure below: (a-c) Show heterogeneous prostate phantom. (d-h) Flowchart for simulating US and PA images using NIRFast for optical and K-wave for acoustic simulations. (i-k) Simulated B-mode US, PA and co-registered US+PA image.

Publications:

- Agrawal, S., Dangi, A. and Kothapalli, S.R., 2020. Modeling Combined Ultrasound and Photoacoustic Imaging: Simulations aiding Device Development and Deep Learning. Photoacoustics (Accepted). doi: https://doi.org/10.1101/2020.11.07.371930

- Agrawal, S., Dangi, A., Frings, N., Albahrani, H., Ghouth, S.B. and Kothapalli, S.R., 2019, February. Optimal design of combined ultrasound and multispectral photoacoustic deep tissue imaging devices using hybrid simulation platform. In Photons Plus Ultrasound: Imaging and Sensing 2019 (Vol. 10878, p. 108782L). International Society for Optics and Photonics. doi: https://doi.org/10.1117/12.2510869

Machine Learning Approach for Unmixing Deep Tissue Photoacoustic Signals

Noninvasive mapping of chromophore distribution in deep tissue is highly desired in numerous biomedical applications. Multispectral photoacoustic (PA) imaging combined with linear spectral unmixing is commonly employed to generate 2-D and 3-D maps of tissue chromophores. However, wavelength- and depth-dependent attenuation of optical fluence leads to nonuniform variations in the spectral behavior of biomolecules, which degrades the accuracy of conventional linear unmixing methods. To address this, a modified independent component analysis (ICA) model is proposed to construct adaptive and statistically independent components for each chromophore from the given spectral PA data. The end-to-end unsupervised nature of the proposed approach makes the process independent of human labeling, and the approach outperformed the standard linear spectral unmixing method when tested on simulated phantoms, experimental phantoms, and in vivo mouse data.
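For context, the conventional linear unmixing baseline can be sketched as a per-pixel least-squares fit of known absorption spectra to the multi-wavelength PA amplitudes. The code below is a minimal illustration for two chromophores, not the modified ICA model; the function name and the spectra values are made up for the example and are not real extinction coefficients.

```python
def unmix_two(spectrum_a, spectrum_b, measured):
    """Least-squares estimate of two chromophore weights for one pixel.

    Solves min || c_a * spectrum_a + c_b * spectrum_b - measured ||^2
    through the 2x2 normal equations. All inputs are equal-length lists,
    one entry per wavelength.
    """
    if not (len(spectrum_a) == len(spectrum_b) == len(measured)):
        raise ValueError("all inputs need one value per wavelength")
    saa = sum(a * a for a in spectrum_a)
    sbb = sum(b * b for b in spectrum_b)
    sab = sum(a * b for a, b in zip(spectrum_a, spectrum_b))
    sam = sum(a * m for a, m in zip(spectrum_a, measured))
    sbm = sum(b * m for b, m in zip(spectrum_b, measured))
    det = saa * sbb - sab * sab
    if abs(det) < 1e-12:
        raise ValueError("spectra are (nearly) linearly dependent")
    c_a = (sam * sbb - sbm * sab) / det
    c_b = (sbm * saa - sam * sab) / det
    return c_a, c_b


# Hypothetical spectra at four wavelengths (illustrative numbers only).
spec_a = [1.0, 0.5, 0.2, 0.1]
spec_b = [0.2, 0.4, 0.8, 1.0]
pixel = [2.0 * a + 3.0 * b for a, b in zip(spec_a, spec_b)]
weights = unmix_two(spec_a, spec_b, pixel)
```

The fit assumes the measured spectrum is a fixed linear mix of the reference spectra. Fluence attenuation that varies with wavelength and depth distorts the measured spectrum and violates that assumption, which is the limitation the adaptive components are meant to address.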

Figure below: In vivo validation. (a) Mouse bearing subcutaneous prostate tumor. Tumor region circled yellow. PA images at four representative wavelengths acquired before (b)–(e) and after (h)–(k) intravenous ICG. Modified ICA and linear unmixing results obtained before (f), (g) and after (l), (m) injecting ICG. White arrows highlight improved Hb detection with the modified ICA.

Publications:

- Agrawal, S., Gaddale, P., Karri, S.P.K. and Kothapalli, S.R., 2021. Learning Optical Scattering Through Symmetrical Orthogonality Enforced Independent Components for Unmixing Deep Tissue Photoacoustic Signals. IEEE Sensors Letters, 5(5), pp.1-4. doi: 10.1109/LSENS.2021.3073927

- Durairaj, D.A., Agrawal, S., Johnstonbaugh, K., Chen, H., Karri, S.P.K. and Kothapalli, S.R., 2020, February. Unsupervised deep learning approach for photoacoustic spectral unmixing. In Photons Plus Ultrasound: Imaging and Sensing 2020 (Vol. 11240, p. 112403H). International Society for Optics and Photonics. doi: https://doi.org/10.1117/12.2546964

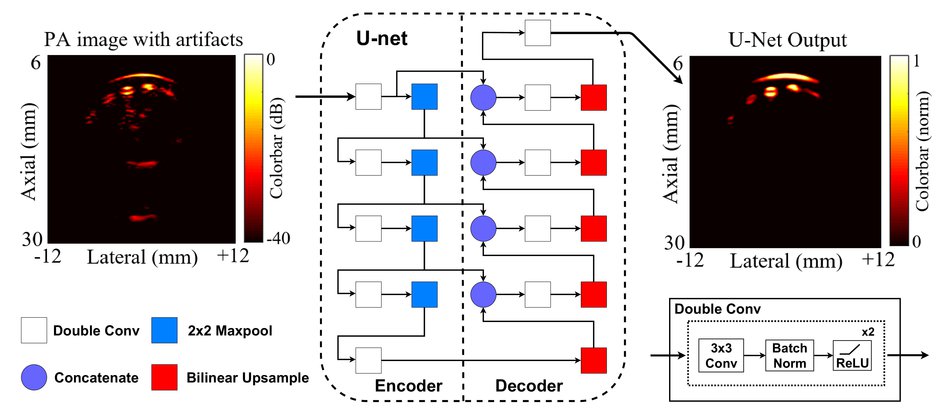

A Deep Learning Approach to Reflection Artifacts Reduction in Photoacoustic Imaging

Reflection of photoacoustic (PA) signals from strong acoustic heterogeneities in biological tissue leads to reflection artifacts (RAs) in B-mode PA images. In practice, RAs often clutter clinically obtained PA images, making these images difficult to interpret in the presence of hypoechoic or anechoic biological structures. To remove PA artifacts, several researchers have exploited 1) the frequency content of time-series photoacoustic data to separate the true signal from artifacts, and 2) the multi-wavelength response of photoacoustic targets, assuming that the spectral nature of RAs correlates well with that of their source signals. These approaches require extensive offline processing and sometimes fail to correctly identify artifacts in deep tissue. This study demonstrates the use of a deep neural network with the U-Net architecture to detect and reduce RAs in B-mode PA images.

Figure below: The architecture of the U-net trained to reduce artifacts in input cross-sectional photoacoustic finger images. White Square: Two sequential 3x3 2D convolutions each followed by batch normalization and a ReLU non-linearity (in text: Double Conv). Blue Square: A 2x2 max pool operation with stride 2. Red Square: A bilinear up-sampling operation with scale-factor 2. Purple Circle: A concatenation operation that concatenates feature maps from the previous layer of the decoder with feature maps of the same size from the encoder.

Publications:

- Agrawal, S., Johnstonbaugh, K., Suresh, T., Garikipati, A., Singh, M.K.A., Karri, S.P.K. and Kothapalli, S.R., 2021, March. In vivo demonstration of reflection artifact reduction in LED-based photoacoustic imaging using deep learning. In Photons Plus Ultrasound: Imaging and Sensing 2021 (Vol. 11642, p. 116421K). International Society for Optics and Photonics. doi: https://doi.org/10.1117/12.2579082

Multi-Modal Imaging Platform combining Ultrasound, Photoacoustics, Doppler and Elastography

This work presents the development of a comprehensive diagnostic platform that integrates four state-of-the-art non-invasive biomedical imaging techniques in real time. B-mode ultrasound and photoacoustic imaging provide anatomical and molecular contrast, whereas Doppler and shear wave elastography provide blood flow and tissue stiffness information. Together, they aid the diagnosis and staging of cancer.

Figure below: Design and execution process of the imaging sequence using our multi-modal imaging platform. All imaging modules here are uniquely color-coded for better visualization of them for the rest of the paper.

Publications:

- Agrawal, S., Sankhe, C., et al. Development of a Multi-Modal Imaging Platform combining Ultrasound, Photoacoustic, Doppler and Elastography for Prostate Cancer Diagnosis. IEEE Transactions on Medical Imaging (In review).

Development of a Clinical Transrectal US and PA Device

The standard diagnostic procedure for prostate cancer (PCa) is transrectal ultrasound (TRUS)-guided needle biopsy. However, due to the low sensitivity of TRUS to cancerous tissue in the prostate, small yet clinically significant tumors can be missed. A real-time and intraprocedural imaging modality that can sensitively detect PCa tumors and, more importantly, differentiate aggressive from nonaggressive tumors could largely improve the guidance of biopsy sampling to improve diagnostic accuracy and patient risk stratification. In this work, we seek to fill this long-standing gap in clinical diagnosis of PCa via the development of a dual-modality imaging device that integrates the emerging photoacoustic imaging (PAI) technique with the established TRUS for improved guidance of PCa needle biopsy.

Figure below: (a) Shows a conventional approach for integrating an optical source with a TRUS probe with no light-bending mechanism. (b) Shows another conventional method adopted for reflecting the optical beams around the TRUS probe using two mirrors, leading to a stand-off of ~6–8 mm. (c) Shows the obtained optical path with the novel proposed design using two acrylic lenses integrated with the probe, leading to an optical focal spot at ~12 mm and eliminating the stand-off problem. (d–f) Show the optical fluence profile images captured experimentally with the novel TRUSPA device at ~7 mm, ~12 mm, and ~18 mm distance from the surface of the probe with 690 nm optical wavelength excitation. (g) Photoacoustic field-of-view (FOV) measurements made with a 0.3 mm pencil lead target in 20 cm−1 reduced optical scattering medium.

Publications:

- Agrawal, S., Johnstonbaugh, K., Clark, J.Y., Raman, J.D., Wang, X. and Kothapalli, S.R., 2020. Design, Development, and Multi-Characterization of an Integrated Clinical Transrectal Ultrasound and Photoacoustic Device for Human Prostate Imaging. Diagnostics, 10(8), p.566. doi: https://doi.org/10.3390/diagnostics10080566

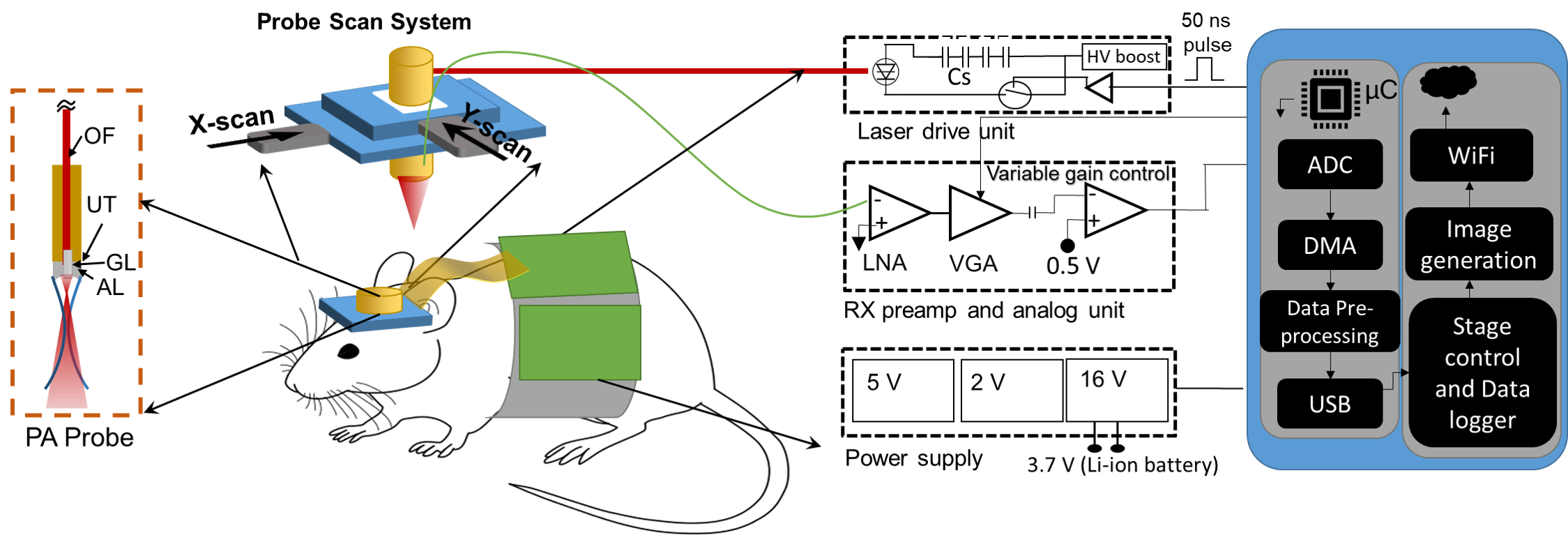

Novel LED-Based PA Computed Tomography System

Photoacoustic computed tomography (PACT) has been widely explored for non-ionizing functional and molecular imaging of humans and small animals. In order for light to penetrate deep inside tissue, a bulky and high-cost tunable laser is typically used. Light-emitting diodes (LEDs) have recently emerged as cost-effective and portable alternative illumination sources for photoacoustic imaging. In this study, we have developed a portable, low-cost, five-dimensional (x, y, z, t, λ) PACT system using multi-wavelength LED excitation to enable similar functional and molecular imaging capabilities as standard tunable lasers.

Figure below: Light Emitting Diodes (LED) based photoacoustic computed tomography (PACT) system. (a) The AcousticX system for combined photoacoustic/ultrasound (PA/US) data acquisition. (b) Typical arrangement of the two LED arrays for B-mode PA/US imaging. (c) Picture of an LED array. (d-g) Experimental setup for 3-D volumetric LED based PACT system.

Publications:

- Agrawal, S., Fadden, C., Dangi, A., Yang, X., Albahrani, H., Frings, N., Heidari Zadi, S. and Kothapalli, S.R., 2019. Light-emitting-diode-based multispectral photoacoustic computed tomography system. Sensors, 19(22), p.4861. doi: https://doi.org/10.3390/s19224861

- Agrawal, S., Singh, M.K.A., Yang, X., Albahrani, H., Dangi, A. and Kothapalli, S.R., 2020, February. Functional, molecular and structural imaging using LED-based photoacoustic and ultrasound imaging system. In Photons Plus Ultrasound: Imaging and Sensing 2020 (Vol. 11240, p. 112405A). International Society for Optics and Photonics. doi: https://doi.org/10.1117/12.2547048

- Agrawal, S., Yang, X., Albahrani, H., Fadden, C., Dangi, A., Singh, M.K.A. and Kothapalli, S.R., 2020, February. Low-cost photoacoustic computed tomography system using light-emitting-diodes. In Photons Plus Ultrasound: Imaging and Sensing 2020 (Vol. 11240, p. 1124058). International Society for Optics and Photonics. doi: https://doi.org/10.1117/12.2546993

- Agrawal, S., Kuniyil Ajith Singh, M., Johnstonbaugh, K., C Han, D., R Pameijer, C. and Kothapalli, S.R., 2021. Photoacoustic Imaging of Human Vasculature Using LED versus Laser Illumination: A Comparison Study on Tissue Phantoms and In Vivo Humans. Sensors, 21(2), p.424. doi: https://doi.org/10.3390/s21020424

A Wearable PA imaging system using Ring Transducer

Several breakthrough applications in biomedical imaging have been reported in recent years using advanced photoacoustic microscopy imaging systems. While two-photon and other optical microscopy systems have recently emerged in portable and wearable form, much less work has been reported on portable and wearable photoacoustic microscopy (PAM) systems. Working towards this goal, we report our studies on a low-cost and portable photoacoustic microscopy system that uses a custom-fabricated 2.5 mm diameter ring ultrasound transducer integrated with a fiber-coupled laser diode.

Figure below: An illustration of a miniaturized wearable photoacoustic microscope for brain imaging of behaving mice, using a ring ultrasound transducer and a fiber-coupled laser diode.

Publications:

- Dangi, A., Agrawal, S., Datta, G.R., Srinivasan, V. and Kothapalli, S.R., 2019. Towards a low-cost and portable photoacoustic microscope for point-of-care and wearable applications. IEEE Sensors Journal, 20(13), pp.6881-6888. doi: 10.1109/JSEN.2019.2935684

Fast Coding Tree Unit (CTU) Splitting Algorithm based on Dynamic Depth Prediction for High Efficiency Video Coding (HEVC)

About HEVC: HEVC is the current video coding standard, developed by the Joint Collaborative Team on Video Coding (JCT-VC), a partnership between the International Organization for Standardization / International Electrotechnical Commission Moving Picture Experts Group (MPEG) and the International Telecommunication Union - Telecommunication Standardization Sector Video Coding Experts Group (VCEG). It aims at a significant improvement in compression performance.

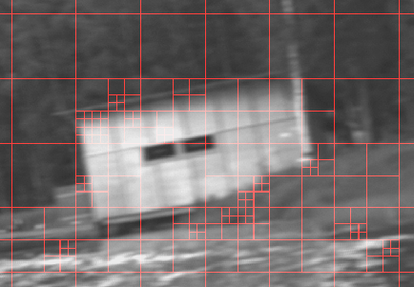

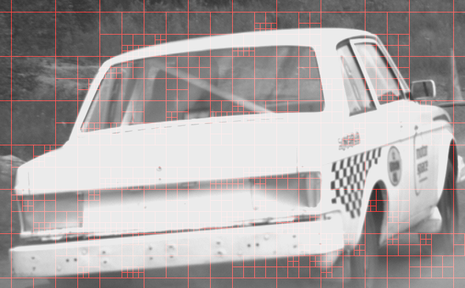

Our Contribution: The tree-structured coding unit (CU) approach adopted in HEVC allows recursive splitting of a CTU into four equally sized blocks. At each block size, 35 intra prediction modes are evaluated for rate-distortion optimization. This is a key factor in the improved compression performance, but it also leads to very high computational complexity. To reduce this complexity, we proposed a new fast CTU splitting method that uses information from the neighboring CTUs to dynamically predict the depth of the current CTU and thereby decide whether to split it. This reduces the computations in the splitting process by 65-70%, with a split decision accuracy of approximately 95%. Sample splitting of two frames using our approach is shown in the accompanying figures.
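To make the idea of neighbor-based depth prediction concrete, the sketch below derives a candidate depth range for the current CTU from the depths of already coded neighbors, then uses that range to skip rate-distortion checks. It is an illustration under stated assumptions, not the proposed algorithm itself: the function names, the choice of neighbors, and the one-level margin around the neighbor depths are all hypothetical.

```python
MAX_DEPTH = 3  # HEVC quadtree depths 0..3 correspond to 64x64 .. 8x8 CUs


def predict_depth_range(neighbor_depths, max_depth=MAX_DEPTH):
    """Return (lowest, highest) depth worth evaluating for the current CTU.

    neighbor_depths: depths of already coded neighbor CTUs (for example
    left, upper, upper-left, upper-right); None marks an unavailable one.
    The +/-1 margin is an assumed heuristic, not taken from the source.
    """
    available = [d for d in neighbor_depths if d is not None]
    if not available:
        return 0, max_depth  # no context: fall back to the full search
    lowest = max(0, min(available) - 1)
    highest = min(max_depth, max(available) + 1)
    return lowest, highest


def split_decision(depth, depth_range):
    """Decide what to do with a CU at the given depth.

    'split'    : depth is below the predicted range, split without RD check
    'stop'     : depth reached the top of the range, do not split further
    'evaluate' : inside the range, run the normal RD comparison
    """
    lowest, highest = depth_range
    if depth < lowest:
        return "split"
    if depth >= highest:
        return "stop"
    return "evaluate"


# Neighbors are all finely partitioned, so shallow depths are skipped.
current_range = predict_depth_range([3, 3, None, 3])
action_at_root = split_decision(0, current_range)
```

Savings in a scheme like this come from the "split" and "stop" branches, which avoid evaluating the intra prediction modes at depths that the neighborhood suggests are unlikely.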

Rate Control Scheme for High Resolution H.264 Video Conference

Motivation: Live video streaming and high-definition video conferencing applications are becoming increasingly popular. These applications are bandwidth intensive and demand both an efficient compression algorithm and careful management of the available bandwidth. By varying the control parameters in the encoder, we can control the encoded bit-stream so that the application does not overshoot the available bandwidth.

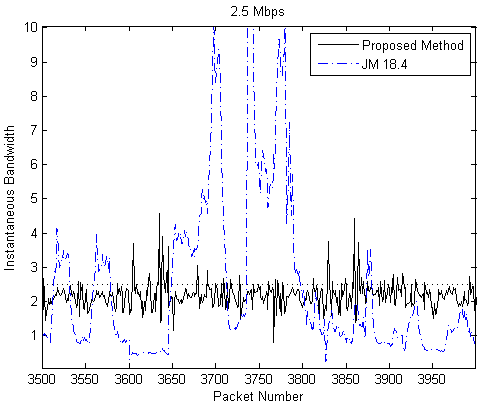

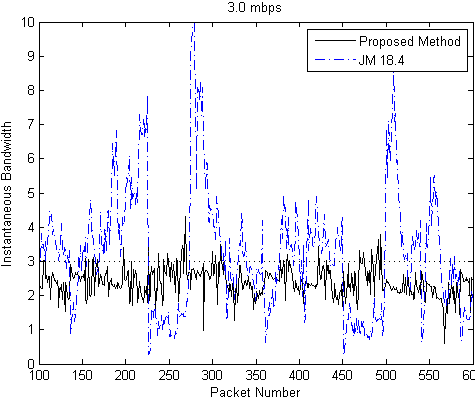

Our Contribution: We have proposed an efficient, low-complexity, slice-level rate control method suitable for high-resolution interactive video conferencing systems. The proposed method minimizes instantaneous bit rate fluctuations in the variable data rate produced by the encoder. For the proposed rate control scheme, instantaneous rate measurements at every packet sending interval show that almost 95% of the samples are successfully restricted to within 5% of the specified rate. Compared with the H.264 reference software JM 18.4, our method achieves better burstiness minimization, as can be seen in the accompanying plots for the specified bandwidths of 2.5 Mbps and 3.0 Mbps.
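The compliance figure quoted above (the share of instantaneous rate samples within 5% of the specified rate) can be computed along the following lines. This is a minimal sketch of the metric only, not of the rate controller; the function name and the sample values are hypothetical.

```python
def fraction_within(rates_mbps, target_mbps, tolerance=0.05):
    """Fraction of rate samples within +/- tolerance of the target rate.

    rates_mbps : instantaneous rates, one per packet sending interval
    target_mbps: specified channel rate
    tolerance  : relative band around the target (0.05 means 5%)
    """
    if not rates_mbps:
        raise ValueError("need at least one rate sample")
    band = tolerance * target_mbps
    inside = sum(1 for r in rates_mbps if abs(r - target_mbps) <= band)
    return inside / len(rates_mbps)


# Made-up samples for a 2.5 Mbps target; 2.7 falls outside the 5% band.
samples = [2.5, 2.6, 2.4, 2.7, 2.45]
compliance = fraction_within(samples, 2.5)
```

A lower burstiness corresponds to a compliance value closer to 1.0 for the same tolerance band.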

Publications:

- Bhattacharyya, S., Agrawal, S., Jeevani, A. and Sengupta, S., 2014. Burstiness minimized rate control for high resolution H.264 video conferencing. In 2014 Twentieth National Conference on Communications (NCC) (pp. 1-6). IEEE. doi: 10.1109/NCC.2014.6811332